Neural Network Tutorials - Herong's Tutorial Examples - v1.22, by Herong Yang

Impact of Training Set Size

This section provides a tutorial example to demonstrate the impact of the training set size. The training set must be large enough to represent the pattern of the entire sample set in order to get an accurate solution.

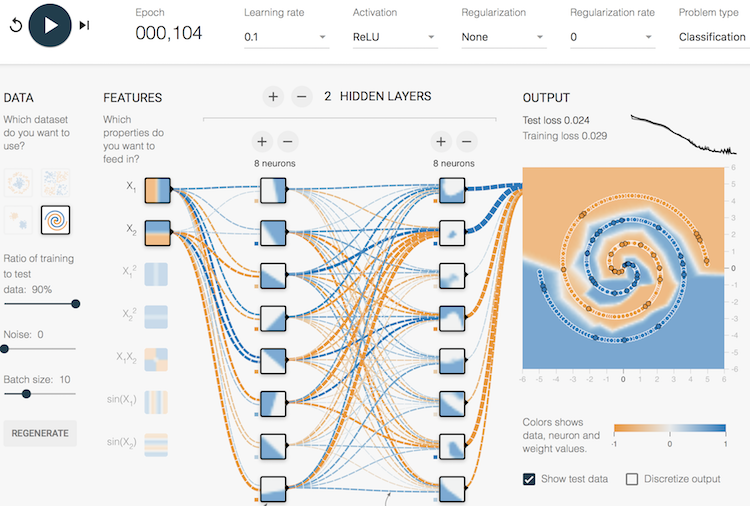

In the previous tutorial, we learned that a neural network with 2 hidden layers of 8 neurons each is able to produce a good solution to the complex classification problem in Deep Playground. The solution was obtained using 90% of the samples as the training set.

In this tutorial, let's find out what will happen if we reduce the size of the training set.
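To make the "Ratio of training to test data" setting concrete, here is a minimal sketch of how a sample pool might be divided by such a ratio. The function name `split_by_ratio`, the pool of 500 samples, and the shuffle-then-cut approach are assumptions for illustration; they are not taken from Deep Playground itself.

```python
import random

def split_by_ratio(samples, train_ratio, seed=0):
    """Shuffle samples and split them into (training, test) lists."""
    if not 0.0 < train_ratio < 1.0:
        raise ValueError("train_ratio must be between 0 and 1")
    shuffled = list(samples)
    # A fixed seed keeps the split repeatable between runs.
    random.Random(seed).shuffle(shuffled)
    cut = round(len(shuffled) * train_ratio)
    return shuffled[:cut], shuffled[cut:]

samples = list(range(500))  # hypothetical pool of 500 samples
for ratio in (0.9, 0.5, 0.3, 0.1):
    train, test = split_by_ratio(samples, ratio)
    print(f"{ratio:.0%} -> {len(train)} training, {len(test)} test")
```

At a ratio of 10%, the network in this sketch would see only 50 of the 500 samples, while the remaining 450 would be used to judge it.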

1. Continue with the previous tutorial.

2. Reduce the neural network to 2 hidden layers with 8 neurons in each layer.

3. Keep "Ratio of training to test data" at 90%. Only 10% of the data is left for testing.

4. Play the model again. You should see a good solution after about 100 epochs.

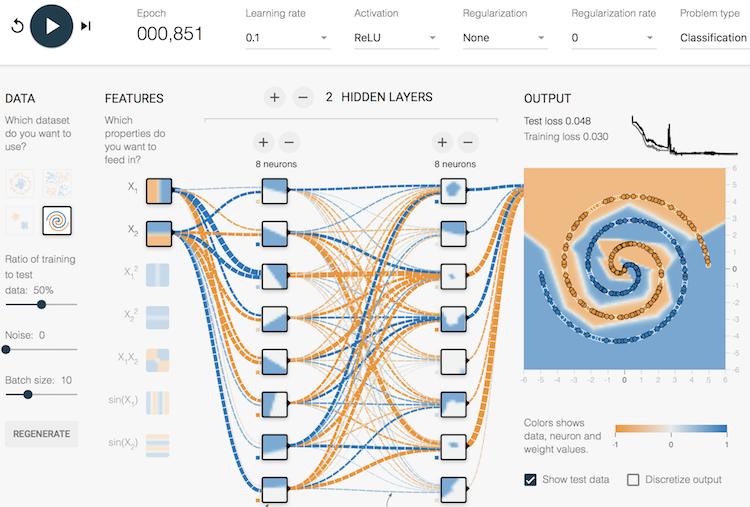

5. Reduce "Ratio of training to test data" to 50%, so that half of the samples are used for training. Play the model again. The model can still reach a good solution, but it takes longer: more than 500 epochs.

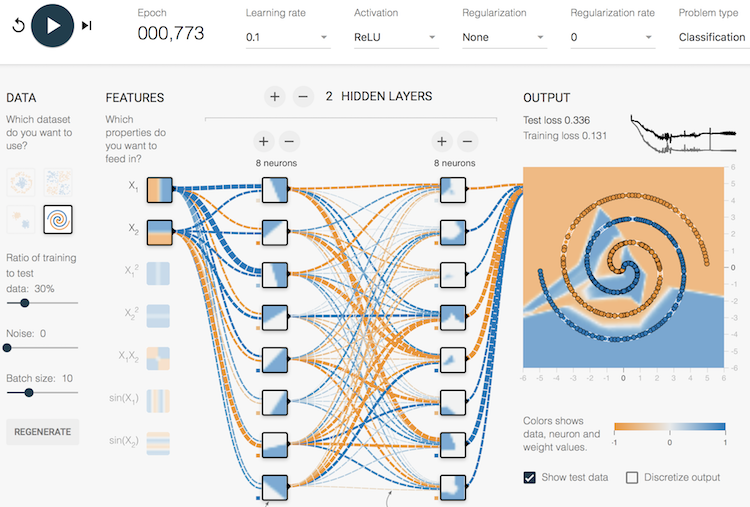

6. Reduce "Ratio of training to test data" to 30%. Play the model again. The model can still fit the training set, with a training loss of 0.131. But the accuracy on the test set drops significantly, with a test loss of 0.336. This drop in accuracy is caused by the reduced size of the training set. A smaller training set does not capture enough of the pattern to cover the test set.

7. Reduce "Ratio of training to test data" to 10%. Play the model again. The model can still fit the training set, with a training loss of 0.131. But it fails completely on the test set, with a test loss of 0.473. Remember that any dummy solution can give a test loss of 0.5. So 10% of the samples completely fails to represent the pattern of the entire sample set.
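The remark that a dummy solution gives a loss of 0.5 can be checked with a short calculation. The sketch below assumes the loss is half the squared error averaged over all samples, and that the two classes are labeled +1 and -1. Both are assumptions about how the loss is defined, and the function name is hypothetical.

```python
def mean_half_squared_error(outputs, labels):
    """Average of 0.5 * (output - label)^2 over all samples."""
    return sum(0.5 * (o - y) ** 2 for o, y in zip(outputs, labels)) / len(labels)

labels = [1, -1, 1, -1, -1, 1]        # made-up labels, for illustration only
dummy_outputs = [0.0] * len(labels)   # a "dummy" model that always outputs 0

print(mean_half_squared_error(dummy_outputs, labels))  # 0.5
```

Under these assumptions, each sample contributes 0.5 * 1 = 0.5 regardless of its label, so a test loss of 0.473 is barely better than a model that has learned nothing.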

Conclusion: for a complex problem, the training set must be large enough to represent the pattern of the entire sample set in order to get an accurate solution from a neural network.
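The same experiment can be roughly imitated outside Deep Playground. The sketch below is not a neural network: to stay dependency-free, it swaps in a 1-nearest-neighbor classifier, and it uses a synthetic two-spiral dataset as a stand-in for the complex classification problem. The dataset shape, noise level, seed, and all function names are assumptions. It reports a misclassification rate rather than the loss values quoted above, so the numbers are not comparable with the tutorial's.

```python
import math
import random

def make_spiral(n_per_class, noise, rng):
    """Return a list of ((x, y), label) points on two interleaved spirals."""
    points = []
    for label, phase in ((1, 0.0), (-1, math.pi)):
        for i in range(n_per_class):
            r = 5.0 * i / n_per_class
            t = 1.75 * 2 * math.pi * i / n_per_class + phase
            x = r * math.sin(t) + rng.uniform(-1, 1) * noise
            y = r * math.cos(t) + rng.uniform(-1, 1) * noise
            points.append(((x, y), label))
    return points

def nearest_label(train, point):
    """Predict the label of the closest training point (1-nearest neighbor)."""
    return min(train, key=lambda s: math.dist(s[0], point))[1]

def holdout_error(data, train_ratio, rng):
    """Shuffle, split by ratio, and return the misclassification rate on the test part."""
    shuffled = data[:]
    rng.shuffle(shuffled)
    cut = max(1, round(len(shuffled) * train_ratio))
    train, test = shuffled[:cut], shuffled[cut:]
    wrong = sum(nearest_label(train, p) != y for p, y in test)
    return wrong / len(test)

rng = random.Random(42)
data = make_spiral(250, noise=0.2, rng=rng)
for ratio in (0.9, 0.5, 0.3, 0.1):
    err = holdout_error(data, ratio, rng)
    print(f"training ratio {ratio:.0%}: test error {err:.3f}")
```

One would expect the test error to tend upward as the training ratio shrinks, because fewer training points are left to trace each spiral arm. Individual runs vary with the seed and the noise level.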
